Which hundred SKUs to fix first

When a catalog business tells me "we have about a hundred bad SKUs but we don't know why," this is the protocol that gets to a defensible action plan in five to seven hours. It catches the analysis traps that make the data lie, and ships only recommendations that survive client pushback.

Most catalog businesses have a tail of product SKUs they suspect are underperforming — slow sellers, weak margins, catalog clutter, "we're carrying dead wood but we don't know which items." The question isn't whether underperformers exist. The question is which ones, why, and what to do about each one — without spending a month on analysis or shipping recommendations that fall apart the first time the client pushes back. I built a protocol that takes a catalog business from "we think we have about a hundred bad SKUs" to a signed action matrix with confidence levels, in five to seven hours of work and about a dollar in AI queries.

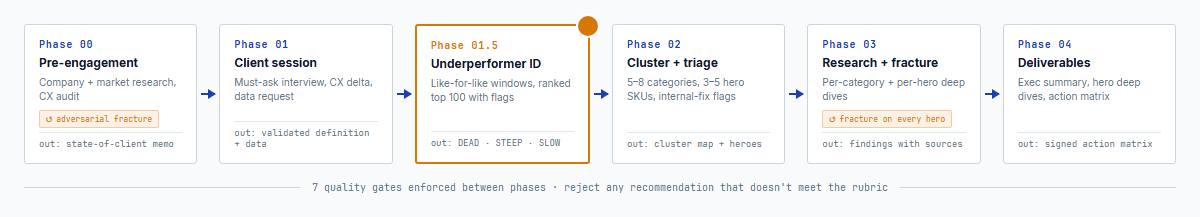

The protocol runs in six phases. Pre-engagement research and a CX audit happen before the first client call. A client session validates the data. A ranking phase produces the top-hundred list. A clustering phase groups them into patterns. Deep research runs per category and per hero SKU, with adversarial fracture at each step. A deliverables phase packages the action matrix. Every hand-off has a quality gate.

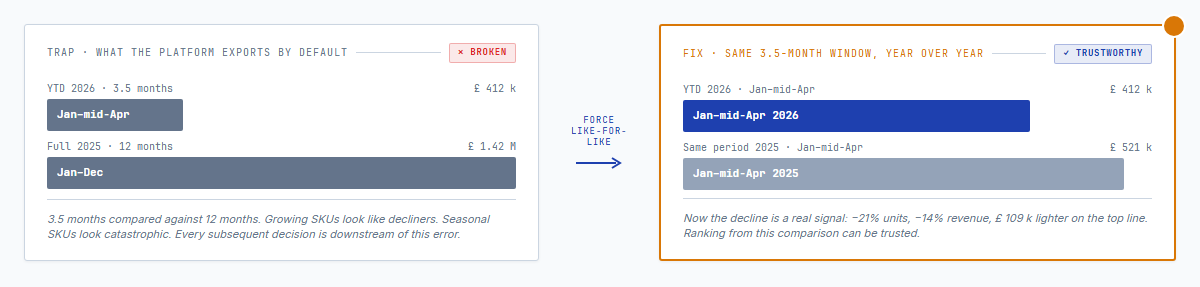

The most common analysis mistake is comparing the wrong time windows. Year-to-date against full prior year is broken on its face — three and a half months of new data against twelve months of old — but clients export their data that way because the platform default exports that way. Growing SKUs look like decliners. Seasonal SKUs look catastrophic. The first step of the ranking phase forces like-for-like windows before any decline ranking is trusted.
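To make the window trap concrete, here is a minimal Python sketch of a like-for-like comparison. The function names, the `(sku, date, units)` row shape, and the sample figures are all illustrative assumptions, not the client's export format or the protocol's actual tooling.

```python
from datetime import date

def same_day_prior_year(d):
    """Shift a date back one year; Feb 29 falls back to Feb 28."""
    try:
        return d.replace(year=d.year - 1)
    except ValueError:
        return d.replace(year=d.year - 1, day=28)

def like_for_like_windows(export_end):
    """Return (current, prior) year-to-date windows of matching calendar span."""
    current = (date(export_end.year, 1, 1), export_end)
    prior = (date(export_end.year - 1, 1, 1), same_day_prior_year(export_end))
    return current, prior

def units_in_window(sales, sku, window):
    """Sum units for one SKU between the window's start and end, inclusive."""
    start, end = window
    return sum(u for s, day, u in sales if s == sku and start <= day <= end)

# Hypothetical SKU: 30 units early in 2023, 90 more later that year,
# and 40 so far in 2024.
sales = [
    ("SKU-1", date(2023, 2, 10), 30),
    ("SKU-1", date(2023, 9, 5), 90),
    ("SKU-1", date(2024, 3, 1), 40),
]

current, prior = like_for_like_windows(date(2024, 4, 15))
ytd = units_in_window(sales, "SKU-1", current)
prior_same_span = units_in_window(sales, "SKU-1", prior)
prior_full_year = units_in_window(
    sales, "SKU-1", (date(2023, 1, 1), date(2023, 12, 31))
)

print(f"naive change: {ytd / prior_full_year - 1:+.0%}")
print(f"like-for-like change: {ytd / prior_same_span - 1:+.0%}")
```

In this made-up case the naive comparison reads as a drop of roughly two thirds, while the matched windows show the SKU growing by about a third.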

With the windows fixed, every declining SKU gets a severity flag. DEAD — went from real volume to zero, usually an operational issue (stock-out, delisting, supplier change) that should be investigated internally before market research touches it. STEEP — dropped more than eighty percent, usually a listing or price shock, same initial response. SLOW — dropped twenty-to-eighty percent, genuine demand or competition shift, the deepest research target. Top hundred, sorted by revenue impact, with a concentration check. On a recent engagement, the top hundred accounted for fifty percent of total declining-SKU revenue loss. Fixing those hundred moves the needle.
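The flagging and ranking rules above can be sketched in a few lines of Python. The thresholds come from the text; the `min_prior_units` cutoff for "real volume," the row fields, and the function names are assumptions made for illustration.

```python
def severity(prior_units, current_units, min_prior_units=10):
    """Flag a SKU as DEAD, STEEP, SLOW, or None (not a qualifying decliner).

    min_prior_units is an assumed cutoff for what counts as "real volume".
    """
    if prior_units < min_prior_units or current_units >= prior_units:
        return None
    if current_units == 0:
        return "DEAD"
    lost = prior_units - current_units
    # Compare as scaled products rather than ratios so that whole-unit
    # counts land on the right side of the 20% and 80% boundaries.
    if lost * 100 > 80 * prior_units:
        return "STEEP"
    if lost * 100 >= 20 * prior_units:
        return "SLOW"
    return None

def top_decliners(rows, n=100):
    """Rank decliners by revenue lost and report the top-n share of total loss."""
    decliners = [r for r in rows if r["current_rev"] < r["prior_rev"]]
    decliners.sort(key=lambda r: r["prior_rev"] - r["current_rev"], reverse=True)
    top = decliners[:n]
    total_loss = sum(r["prior_rev"] - r["current_rev"] for r in decliners)
    top_loss = sum(r["prior_rev"] - r["current_rev"] for r in top)
    share = top_loss / total_loss if total_loss else 0.0
    return top, share

# Hypothetical rows; revenue in whole currency units.
rows = [
    {"sku": "A", "prior_rev": 1000, "current_rev": 0},
    {"sku": "B", "prior_rev": 800, "current_rev": 500},
    {"sku": "C", "prior_rev": 300, "current_rev": 200},
    {"sku": "D", "prior_rev": 400, "current_rev": 450},  # grower, excluded
]
top, share = top_decliners(rows, n=2)
print([r["sku"] for r in top], f"{share:.0%} of total decline")
```

The concentration share is the number to check before committing research time: if the top-n slice covers only a small fraction of the total loss, the cut-off probably needs to move.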

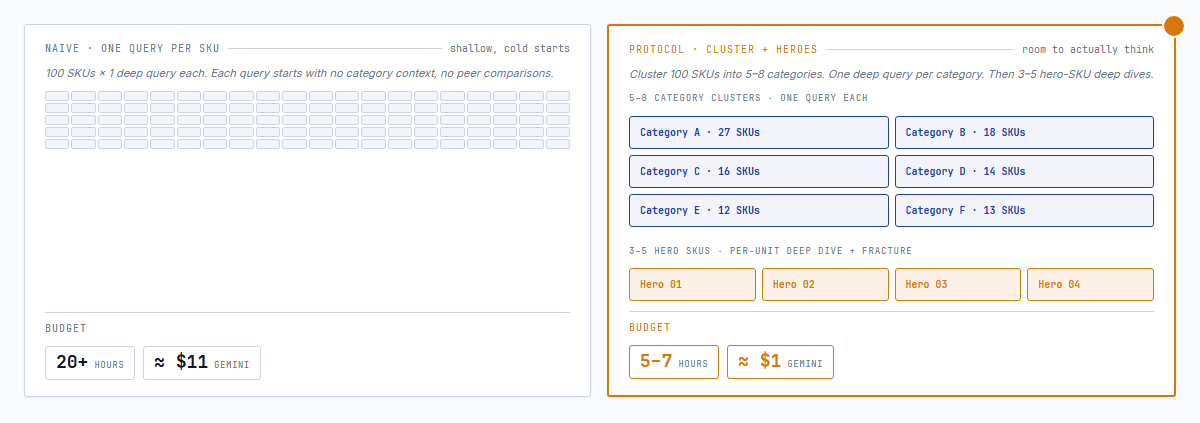

Naive approach to the research phase: one deep market-research query per SKU. A hundred SKUs at about eleven cents per query is eleven dollars and twenty hours of AI time, and the results are shallow because each query starts cold. Protocol: cluster the hundred into five to eight categories, run one deep query per cluster, and pick three to five hero SKUs for per-unit deep dives. Same ground covered, ten times cheaper, higher quality, because each query has room to actually think.
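The budget arithmetic is simple enough to sketch. The per-query cost of eleven cents is an assumption, inferred from the eleven-dollar total for a hundred queries; the cluster and hero counts below are picked from inside the stated ranges, and the function name is hypothetical.

```python
def query_budget(n_skus, n_clusters, n_heroes, cost_cents_per_query):
    """Compare one-query-per-SKU against cluster-plus-hero querying.

    Costs are kept in integer cents to avoid floating-point noise.
    """
    naive_cents = n_skus * cost_cents_per_query
    clustered_queries = n_clusters + n_heroes
    clustered_cents = clustered_queries * cost_cents_per_query
    return {
        "naive_queries": n_skus,
        "naive_cents": naive_cents,
        "clustered_queries": clustered_queries,
        "clustered_cents": clustered_cents,
        "savings_factor": naive_cents / clustered_cents,
    }

# Assumed: 6 clusters and 4 hero SKUs at 11 cents per deep query.
plan = query_budget(n_skus=100, n_clusters=6, n_heroes=4, cost_cents_per_query=11)
print(plan)
```

With these inputs the clustered plan runs ten queries instead of a hundred. Anywhere in the stated ranges (eight to thirteen queries in total) the saving stays in the neighborhood of tenfold.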

On a recent engagement the top-line numbers looked dire: a twenty-one percent drop in units, fourteen percent in revenue, over a hundred thousand pounds sterling lighter year over year. Analysis could have spent a week building a case for SKU-specific demand problems. Fifteen minutes into the Phase 1 client interview, the real cause surfaced: the client had quietly raised prices ten percent across the board between the two periods. The data was showing price elasticity, not SKU weakness. The protocol is built around this kind of beat. The data lies about cause until you talk to the client.
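Once the price rise is known, a back-of-envelope check shows how much of the revenue drop it can account for. This is an illustrative sketch rather than a step the protocol prescribes; it assumes a uniform price change and no shift in product mix, and the function name is hypothetical.

```python
def implied_revenue_change(units_change, price_change):
    """Revenue change implied by a units change and a uniform price change.

    Both inputs and the result are fractions (for example -0.21 for -21%).
    Assumes every SKU moved by the same price percentage and that the
    product mix did not shift.
    """
    return (1 + units_change) * (1 + price_change) - 1

implied = implied_revenue_change(units_change=-0.21, price_change=0.10)
print(f"implied revenue change: {implied:+.1%}")
```

Under those assumptions, a twenty-one percent fall in units combined with a ten percent price rise implies a revenue fall of about thirteen percent. That is close to the reported fourteen, which suggests the across-the-board price change and the volume response to it explain most of the headline.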

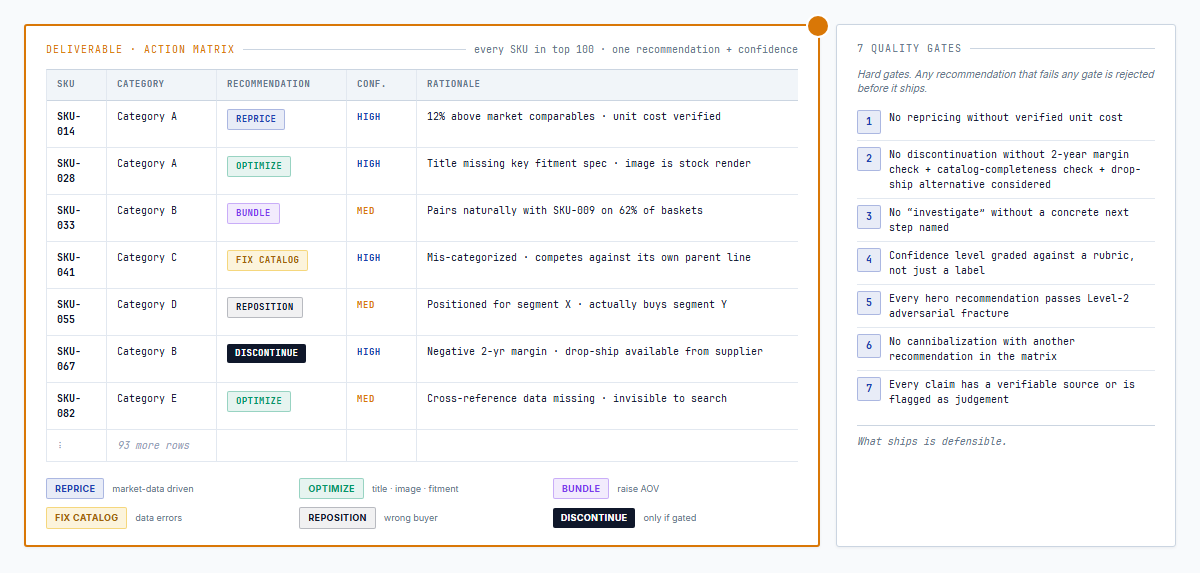

The deliverable is an action matrix. Every SKU in the top hundred gets one of six recommendations — reprice, optimize the listing, bundle with complementary items, fix catalog data, reposition, or discontinue — plus a confidence level graded against a rubric, not just a label. Seven quality gates reject any recommendation that fails: no repricing without verified unit cost, no discontinuation without a two-year margin and catalog-completeness check, no generic "investigate" without a concrete next step. What ships is defensible.
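A minimal Python sketch of how gates like these could be enforced mechanically. Only the three gates named above are implemented, since the others are not spelled out here. The field names (`unit_cost_verified`, `two_year_margin_checked`, `catalog_completeness_checked`, `next_step`) and the action identifiers are assumptions for illustration.

```python
# The six recommendation types from the action matrix.
ACTIONS = {
    "reprice",
    "optimize_listing",
    "bundle",
    "fix_catalog_data",
    "reposition",
    "discontinue",
}

def gate_failures(rec):
    """Return the list of gate failures for one recommendation (empty = ships)."""
    failures = []
    action = rec.get("action")
    if action not in ACTIONS:
        failures.append("action is not one of the six recommendation types")
    if action == "reprice" and not rec.get("unit_cost_verified"):
        failures.append("reprice without verified unit cost")
    if action == "discontinue" and not (
        rec.get("two_year_margin_checked")
        and rec.get("catalog_completeness_checked")
    ):
        failures.append(
            "discontinue without two-year margin and catalog-completeness check"
        )
    if not str(rec.get("next_step", "")).strip():
        failures.append("no concrete next step")
    return failures

# Hypothetical recommendations.
ok = {
    "sku": "A",
    "action": "reprice",
    "unit_cost_verified": True,
    "next_step": "Test a 5% price cut for four weeks",
}
bad = {"sku": "B", "action": "discontinue", "two_year_margin_checked": True}

print(gate_failures(ok))
print(gate_failures(bad))
```

Returning every failure rather than stopping at the first makes the rejection itself actionable: the analyst sees everything a recommendation still needs before it can ship.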

The reusable part is the protocol itself. Any catalog business with a web presence and two comparable time windows of sales data can be run through it: hardware, niche apparel, specialty food, specialty auto parts, industrial distribution, home-and-garden. The output is the same shape every time: a ranked top-hundred, a clustered research pass, a hero-level deep dive, an action matrix that survives pushback. I'm running this on a British e-commerce business right now.

If you run a catalog business and you know the tail is weak but can't pinpoint which SKUs or why, drop me a line. I can point the protocol at your catalog and come back with a ranked list and flags within a day.